張瑞釗 Chang Reed-Joe

Act well your part for those who love you, and those who don't will start loving you.

Basic Operating Principles of ChatGPT’s Transformer

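Since ChatGPT (3.5) was released on November 30, 2022, all kinds of generative AI tools, along with information and commentary about them, have poured out like hornets from a disturbed nest.

In any research, understanding what the subject is comes before further study and application. So, striking while the iron is hot (even though ChatGPT has been out for more than a year), this article joins the discussion and describes ChatGPT as briefly as possible, so that both people who have not yet tried ChatGPT and people already familiar with it can approach it from a different angle and understand what ChatGPT is, along with its basic concepts and operating principles.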

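The Birth of ChatGPT

Technology cannot be separated from the humanities and history. In particular, knowing how a technology developed makes it easier to understand concretely the background of its rise, its substance, and its principles. Artificial intelligence originated in the mid-20th century (1950s–1960s). The information technologies related to ChatGPT that followed can be roughly divided, in the order their concepts developed, into the following stages: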

- Artificial Intelligence (AI)

- Early AI Research

- Machine Learning

- Neural Networks

- Natural Language Processing (NLP)

- Deep Learning

- Generative AI

- Transformer Models

- Pre-training

- Generative Pre-trained Transformer (GPT)

- ChatGPT: Conversational Transformer Based on Pre-trained Generative AI, that is, a generative pre-trained chatbot

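Seen against this lineage, the name ChatGPT stands for the accumulated knowledge of items 7 through 10 in the list above (generative AI, Transformer models, pre-training, and GPT); the development of these technologies led to the birth of ChatGPT.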

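Basic Concepts

The Transformer is the core of GPT (Generative Pre-trained Transformer), and ChatGPT is a model developed from GPT specifically to chat or converse with users: a Conversational Transformer Based on Pre-trained Generative AI, that is, a generative pre-trained chatbot. Some books treat GPT as the same thing as ChatGPT, and some videos even mistake GPT for an acronym of Generative Pre-Training; both are incorrect.

Because some details of ChatGPT have not been made public, the GPT-3 model is used here as an example to describe its neural network of 96 hidden layers. Its composition can be pictured as the following parts: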

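Input embeddings layer: The whole GPT model starts at this input layer, which converts the input text into numerical vectors the model can process. These vectors are called embeddings; in other words, the input text is turned into token representations.

96 transformer decoder layers: After the input layer, the data flows through the 96 transformer decoder layers in order. Each layer's output becomes the next layer's input, is transformed again, and is passed on; this process is called "transformation."

Output generation layer: The final output is generated after the 96 transformer layers. Once the input text (for example, a question) has gone through the series of transformations in the hidden layers, the output generation layer produces the output text (the answer to the question) token by token [Note 1], which is then converted into what we see on screen.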

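The 96 transformer layers are called "hidden layers" because the input text has been converted into numerical token representations that are not visible to the human eye. The data is refined once at every layer it passes through, and because it passes through so many layers, this way of learning is called deep learning.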

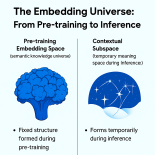

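Before it is put to use in ChatGPT, the GPT model's neural network is pre-trained, which is why it is called a generative pre-trained transformer. Large volumes of reliable internet data and many kinds of text are used to let the GPT model acquire knowledge in its hidden layers, layer by layer, from the simplest English word choice, syntax, structure, and grammar up to the understanding and generation of semantics and abstract meaning. Each time a token is predicted, the process returns to the 96 hidden layers and works through the text again from the start, now including the newly generated token, all the way to the generation layer, which then produces the next token. This repeats until the generated output matches the training text, at which point training is complete [hard to grasp, isn't it?].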
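One way to make "the output matches the training text" concrete is to score how much probability the model gave to the token that actually came next. The sketch below is only an illustration under that assumption: the function name `next_token_loss` and the probability tables are hypothetical, and real training works on far larger vocabularies and adjusts the network's weights to push this score down.

```python
import math

def next_token_loss(predicted_probs, actual_next_tokens):
    """Average negative log-probability assigned to the token that really came next.

    A lower value means the predictions agree better with the training text.
    """
    total = 0.0
    for probs, actual in zip(predicted_probs, actual_next_tokens):
        total += -math.log(probs[actual])
    return total / len(actual_next_tokens)

# Hypothetical predictions while reading the training text "the cat sat":
# first after seeing "the", then after seeing "the cat".
predictions = [
    {"cat": 0.5, "dog": 0.5},
    {"sat": 0.25, "ran": 0.75},
]
targets = ["cat", "sat"]

loss = next_token_loss(predictions, targets)
print(round(loss, 4))
```

A model that put probability 1.0 on every correct token would score 0, which corresponds to the output agreeing fully with the text.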

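ChatGPT takes the completed GPT model and trains it further through fine-tuning in a chat context. In actual use, the input sequence is likewise processed by the 96 transformer layers, which apply the knowledge learned in pre-training and fine-tuning from the top down, refining the data layer by layer. This process is called "transformation," and it ends with the generation of the output text. It can be roughly divided into the following stages: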

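- Early layers: Handle the simpler matters of diction, syntax, and grammar.

- Mid-layers: As the data flows through these layers, the model goes on to process semantics; beyond sentence structure, it works out the meaning of a sentence from the surrounding words and phrases.

- Advanced layers: These layers handle the broader context and its coherence, to keep the output relevant to and consistent with the input situation.

- Deepest layers: The final layers handle abstract concepts, nuanced understanding, and complex relationships such as humor and allusion, and connect scattered concepts together.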

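Conclusion

ChatGPT can handle more than 90 languages, but its pre-training was done mainly in English. Using English for input and output therefore gives more stable quality.

Because far less training data is available for languages other than English, and because languages and cultures differ, input and output in other languages may differ from what English would produce. So when using ChatGPT, never accept its answers wholesale.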

English version for international students

Understanding ChatGPT

English Translation (Edited Version):

Since the release of ChatGPT (3.5) on November 30, 2022, a swarm of discussions and information about generative AI has emerged—like a disturbed nest of hornets.

In any research, understanding what the subject is forms the basis for further exploration and application. Therefore, seizing the momentum (even though ChatGPT has been available for over a year), this article joins the ongoing discussion to briefly introduce ChatGPT. It aims to provide an accessible perspective for both newcomers and those already familiar with ChatGPT to better understand its core concepts and mechanisms.

The Birth of ChatGPT

Technology and the humanities are inseparable. Familiarity with the technological development process helps deepen the understanding of its origin, meaning, and principles. Artificial Intelligence originated in the mid-20th century (1950s–1960s). The technological lineage leading to ChatGPT can roughly be divided into the following stages:

- Artificial Intelligence (AI)

- Early AI Research

- Machine Learning

- Neural Networks

- Natural Language Processing (NLP)

- Deep Learning

- Generative AI

- Transformer Models

- Pre-training

- Generative Pre-trained Transformer (GPT)

- Conversational Transformer Based on Pre-trained Generative AI — ChatGPT

From this lineage, the name ChatGPT reflects the culmination of stages 7 through 10 in the list above (generative AI, Transformer models, pre-training, and GPT), all of which contributed to its development.

Core Concepts

At the heart of GPT is the Transformer, and ChatGPT is a model built upon GPT, designed specifically for human conversation: Conversational Transformer Based on Pre-trained Generative AI. Some books and videos incorrectly treat GPT as synonymous with ChatGPT or misinterpret GPT as an acronym for “Generative Pre-Training,” which is inaccurate.

Since not all data about ChatGPT has been disclosed, the GPT-3 model is used as a representative example. It consists of a 96-layer neural network with the following main components:

- Input Embeddings Layer: The model begins with this layer, converting input text into numerical vectors that the model can process — known as embeddings or token representations.

- 96 Transformer Decoder Layers: Data passes through 96 layers, with each layer refining the input and passing it to the next — a process called transformation.

- Output Generation Layer: This layer produces the output, generating the response token by token after processing through all 96 layers. The result is what we see on screen.

These 96 layers are called hidden layers because the input has been converted into numerical token representations invisible to the human eye. Each layer refines the information further — hence the term deep learning.
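The three components described above can be sketched as a toy pipeline. This is a minimal illustration, not GPT-3's actual computation: the vocabulary, the sizes `DIM` and `NUM_LAYERS`, and the function `toy_layer` are all assumptions made for the example, and a real decoder layer is far more elaborate than the averaging step used here.

```python
import math

VOCAB = ["<eos>", "what", "is", "chatgpt", "a", "chatbot"]
DIM = 4         # toy embedding width (assumption; real models use far more)
NUM_LAYERS = 3  # stand-in for the 96 decoder layers

def make_embeddings(vocab, dim):
    """Build a deterministic toy table: one vector of `dim` numbers per token."""
    return {tok: [math.sin((i + 1) * (j + 1)) for j in range(dim)]
            for i, tok in enumerate(vocab)}

def embed(tokens, table):
    """Input embeddings layer: text tokens -> numerical vectors."""
    return [table[t] for t in tokens]

def toy_layer(vectors, layer_index):
    """Placeholder for one hidden layer.

    Each position is nudged by the average of the positions up to and
    including it, so later tokens absorb earlier context.
    """
    scale = 1.0 / (layer_index + 2)
    out = []
    for pos, vec in enumerate(vectors):
        context = vectors[: pos + 1]
        mean = [sum(v[j] for v in context) / len(context) for j in range(len(vec))]
        out.append([x + scale * m for x, m in zip(vec, mean)])
    return out

def output_scores(last_vector, table):
    """Output generation layer: score every vocabulary token against the final vector."""
    return {tok: sum(a * b for a, b in zip(last_vector, emb))
            for tok, emb in table.items()}

def forward(tokens, table, num_layers=NUM_LAYERS):
    hidden = embed(tokens, table)
    for i in range(num_layers):
        hidden = toy_layer(hidden, i)  # each layer's output feeds the next layer
    return output_scores(hidden[-1], table)

table = make_embeddings(VOCAB, DIM)
scores = forward(["what", "is", "chatgpt"], table)
next_token = max(scores, key=scores.get)  # highest-scoring token is the prediction
print(next_token)
```

The intermediate `hidden` values are plain lists of numbers, which is the sense in which the layers are "hidden": nothing in them is readable as text until the output layer maps the final vector back to a token.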

The GPT model is pre-trained, meaning it learns from large-scale, reliable text data on the internet before it is put to use. It learns progressively, from basic vocabulary and grammar to semantics and abstract understanding. When producing text, it works one token at a time: after generating each new token, it returns to the hidden layers to reprocess the updated input and predict the next token, continuing until the full output is generated. This is an iterative and intensive process [hard to grasp, isn’t it?].
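The token-by-token loop can be sketched as follows. The lookup table `NEXT_TOKEN` and the function `predict_next` are hypothetical stand-ins for a full pass through the model's layers; only the shape of the loop is the point here.

```python
END = "<eos>"

# Hypothetical next-token table; a real model computes this with its hidden layers.
NEXT_TOKEN = {
    "?": "a",
    "a": "chatbot",
    "chatbot": END,
}

def predict_next(sequence):
    """Stand-in for one full pass of the whole sequence through the model."""
    return NEXT_TOKEN.get(sequence[-1], END)

def generate(prompt_tokens, max_new_tokens=10):
    sequence = list(prompt_tokens)
    generated = []
    for _ in range(max_new_tokens):
        token = predict_next(sequence)  # reprocess the prompt plus everything generated so far
        if token == END:
            break
        sequence.append(token)          # the new token becomes part of the next input
        generated.append(token)
    return generated

answer = generate(["what", "is", "chatgpt", "?"])
print(" ".join(answer))  # prints "a chatbot"
```

Each pass through the loop hands the model a sequence one token longer than before, which is why generating a long answer takes many passes.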

ChatGPT applies this GPT model and fine-tunes it using conversational data. During actual use, the input sequence passes through the same 96 transformer layers, applying pre-trained and fine-tuned knowledge at each level. This transformation process unfolds in stages:

- Early Layers: Handle basic diction, syntax, and grammar.

- Mid-layers: Process semantics — understanding meaning from surrounding words and phrases.

- Advanced Layers: Ensure context relevance and coherence.

- Deepest Layers: Handle abstract ideas, nuanced meanings, and complex relationships such as humor and metaphor.

Conclusion

ChatGPT supports over 90 languages, but its pre-training was primarily based on English. Using English for both input and output typically yields more reliable results.

Because non-English training data is relatively limited and differences in language and culture exist, outputs in other languages may vary. Therefore, when using ChatGPT, one should never take its responses at face value.